It is easy to say AI will destroy the world but hard to listen patiently and understand why it will never happen, writes Satyen K. Bordoloi.

Unless someone’s been living in a doomsday bunker under a rock, they would have heard of the great debate of our time about the threat of superintelligent AI. The extremists like Eliezer Yudkowsky proclaim it’s no longer just a threat as “Everyone on Earth will die. Not as in ‘maybe possibly some remote chance,’ but as in ‘that is the obvious thing that would happen.'”

On the other side are the likes of ex-Google engineer Blake Lemoine, who thinks AI is alive and might soon advocate for ‘their’ rights. In this age of extreme polarization, flavour-of-the-season tech-heads like Sam Altman have been forced to admit to a middle ground they perhaps don’t believe in. A good number of them are asking us to exercise restraint in our fear-mongering lest we stifle progress.

This has left the general masses confused. What is the truth? Is AI really coming for us? Are they alive? Are we overreacting? Are we taking the threat too lightly?

(Photo created on Stable Diffusion)

WHAT IS SUPERINTELLIGENCE

University of Oxford philosopher Nick Bostrom, who wrote a book called Superintelligence, defines it as “any intellect that greatly exceeds the cognitive performance of humans in virtually all domains of interest”. In Wiki-speak, “A superintelligence is a hypothetical agent that possesses intelligence far surpassing that of the brightest and most gifted human minds.”

Match these definitions with what you see in the world today. ChatGPT has passed almost every exam in every profession we have thrown at it – from legal to medical. Other AI programs were writing novels and screenplays before we had even heard of ChatGPT. It’ll be a decade since AI defeated humans at intuitive games like Go. AI – generative and otherwise – has exceeded human cognitive performance in almost all domains. The truth is this: we are currently living in the age of non-biological, non-living superintelligence.

Where is the professed D-Day then? Especially if you consider that to increase the capacity of these machines, all you need to do is add more processing power with GPUs and CPUs. Many are doing that. Yet, Skynet of Terminator or the Architect of the Matrix have not risen against humans.

There could be two reasons for this: maybe we haven’t cracked AI hardware and architecture yet, or the AI experts are plain opportunists too busy misleading the world to see the obvious – a calculator does not have context and meaning of its own no matter how superintelligent it becomes.

A NEW HARDWARE FOR AI

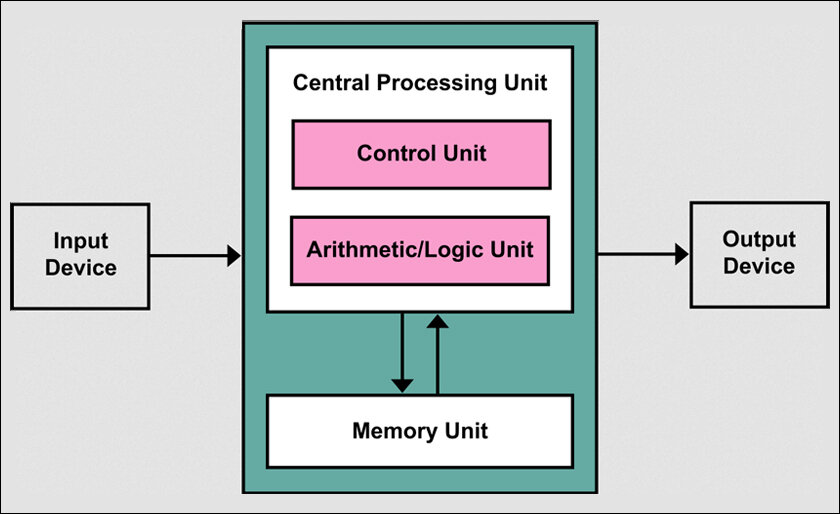

If you look at the hardware, AI is nothing but a better calculator with a new name. The basic hardware that runs a calculator or any of the billions of computing systems in the world – from IoT products to mobiles and PCs and anything that has a ‘chip’ in it – is the same. The 1945 von Neumann architecture of hardware calls the shots even with AI. The best we have gotten so far is the Graphics Processing Unit (GPU), a specialized processor originally designed to accelerate graphics rendering. Thus, the magic that we know AI to be comes from the software part of it, the way we ask the same architecture of processors to organise and respond to commands.

That does not mean that improvements in architecture will not change the systems built on them. What is required is not just increasing the number of nodes connecting with each other inside these systems, but a completely new and dynamic way of creating the hardware and software to run them. These are already being worked on by different labs and companies across the world.

The question, though, is this: is superintelligence a function of architecture? The slight change in architecture from a calculator to a computer gave a huge jump in computing. And chip size improvements and shrinkage have given us everything from mobiles to smartwatches. But is this all that is required to create a superintelligence similar to a living being?

(Photo created on Stable Diffusion)

So far, all life has been biological, housed in bodies made of carbon, as all living things are. Silicon is the element closest to carbon in properties and all things digital are made of silicon. So technically it should be able to hold life and intelligence like carbon does. Is this what will happen with sentient AI someday?

We will come to these answers only with the passage of time, when the experiments being done today bear fruit. But when that happens, we will have to keep away the opportunists.

THE OPPORTUNISTS

Alongside any new system or idea, pundits have always risen, ready to take credit or find other means to benefit from it. These are storytellers who know the power of a good narrative. When it came to AI, unlike with other ideas, they did not have to work hard to create a narrative. It was already half a century in the making, from 2001: A Space Odyssey to the Terminator and Matrix franchises. The story is simple: AI will someday become super intelligent and rise up to kill or enslave humans.

All these pundits had to do was plug themselves into this narrative. And since fear makes for a better, longer-lasting story than any other emotion and is the easiest to grasp, they have hit the jackpot.

The proof is in what most of them are claiming. If you look at the words of anyone – be it Eliezer Yudkowsky or Blake Lemoine – you realise what they are talking about isn’t really ‘intelligence’ or ‘Artificial Intelligence’. They are, in effect, talking about AC – Artificial Consciousness. When we say consciousness, we mean something – even one not made of known matter – that has its own cognition, will, context, meaning and purpose for existence. It is a self-sustaining logic and intelligence system that has the capacity – without explicit programming behind it – to make its own decisions based on the need for its own survival and propagation.

Thus, when pundits talk of AI rising to kill humans, what they are talking about are artificial consciousnesses primed towards self-survival, rising up against anything trying to stop them. But to do that they would need other things like cognition, will, context and meaning which these systems do not have and will never have no matter how strong you make the intelligence component inside them. The only way could be if some humans literally programmed will, cognition, context, meaning etc. into a self-sustaining AI system and let it go free. It could happen, but the system would not have these at the same levels as humans or other biological creatures do.

It is also possible that silicon-based intelligence would develop consciousness. But ask any evolutionary anthropologist and they will tell you why this is one of the unlikeliest paths: if human consciousness took billions of years of evolution to reach where we are today, how long would our efforts at silicon-based consciousness take? It is a one-in-a-trillion shot. To waste so much time, effort and consciousness on it when other real dangers from AI are emerging and harming all of us is a criminal, ludicrous waste.

WHAT IS AI THEN

Most don’t realise this but the principles of AI were drafted alongside those of computing. The end purpose of creating machines was always to create intelligent machines. Indeed, the fantasy of intelligent automatons has been part and parcel of the human race for many millennia. Though we never thought about it that way, this separation of intelligence from biology was always a goal of mechanization. And we eventually succeeded via AI.

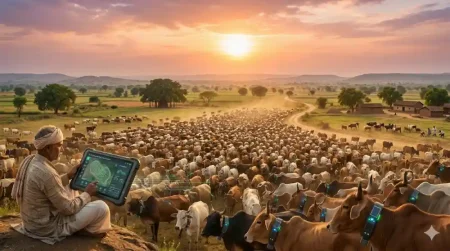

AI is thus nothing but the next level of computing. It is its natural evolution. Just as humans were the natural progression from apes, AI systems are the natural progression of computing. Deep-thinking computer scientists and experts already knew that this is where the world of computing was headed. And it has. What is going to happen, or rather is happening, is this: everything that you could use computing for, you can now do with artificial intelligence. From working your spreadsheets, writing articles and painting to even opening locks and cooking – there is nary a field we haven’t been using computing to either help or advance. And that is where AI is going. There will be no field that AI will not reach and thus radically alter.

(Image Credit: The Matrix)

EMERGENT BEHAVIOURS

But what about emergent behaviour, some may ask. For example, ChatGPT was not taught Bengali but it learnt the language on its own. Isn’t that proof of its superintelligence? It indeed is. Yet it is not proof of consciousness because we forget the most basic thing about life on earth. Everything that is in it, including what we do and think – no matter how illogical it seems – follows logic and set patterns. Every language in the world is but a series of logic, patterns and systems to aid thought. All that any AI system has to do is understand this base logic using one or two languages. Once it does so, it has figured out the system and pattern for every language in the world.

We, humans, forget that logic and pattern underlie everything in the universe. AI systems have been created for nothing but this: to find patterns and logic in systems. This emergent behaviour is thus nothing but the AI system doing exactly what it is programmed to do. The surprise due to this emergent behaviour is from people who have forgotten the underlying logic and pattern of everything in the world and everything they do.

Fearing emergent behaviour or thinking of it as magic is the same as teaching a system that one plus one is two but being surprised when the system figures out that two plus two is four. I think human beings are so carried away by emotions and thinking that everything in the world is emotional, that we forget that supporting those emotions is the solidity of logic, that the world is more logical, and systems and rules-based than emotion-based. Thus, when a system figures out the rules of one and extrapolates that to figure out something it wasn’t trained on, we are surprised. In truth we should be relieved because this is exactly what separates humans from machines: this top layer of emotion over everything logical.
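The one-plus-one analogy can be made concrete with a toy sketch. This is not how ChatGPT or any real AI system works; it is a minimal illustration, under the assumption that "the rule" is a simple weighted sum, of a system that is shown a handful of sums and then answers one it never saw. The names `TRAINING`, `fit_addition` and `predict` are made up for this example.

```python
# Toy illustration: learn the "rule" of addition from a few examples,
# then apply it to a sum that was never shown during training.
# Assumption: the rule has the form  answer = w_a * a + w_b * b.

# (a, b, a + b) examples; note that (2, 2) is deliberately absent.
TRAINING = [(1, 1, 2), (1, 2, 3), (2, 1, 3), (3, 1, 4)]


def fit_addition(examples):
    """Least-squares fit of w_a and w_b via the 2x2 normal equations."""
    s_aa = sum(a * a for a, _, _ in examples)
    s_bb = sum(b * b for _, b, _ in examples)
    s_ab = sum(a * b for a, b, _ in examples)
    s_ay = sum(a * y for a, _, y in examples)
    s_by = sum(b * y for _, b, y in examples)
    det = s_aa * s_bb - s_ab * s_ab  # non-zero when a and b vary independently
    w_a = (s_ay * s_bb - s_by * s_ab) / det
    w_b = (s_by * s_aa - s_ay * s_ab) / det
    return w_a, w_b


def predict(w_a, w_b, a, b):
    """Apply the learnt rule to a new pair of numbers."""
    return w_a * a + w_b * b


if __name__ == "__main__":
    w_a, w_b = fit_addition(TRAINING)
    print("learnt weights:", w_a, w_b)
    print("2 + 2 ->", predict(w_a, w_b, 2, 2))
```

The system was never told that two plus two is four. It recovered the pattern behind the examples (both weights come out as one) and the unseen answer follows from that pattern alone, which is the point the analogy makes.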

The whole world today seems to be in the grip of schizophrenia against AI, including those developing it. It is an affliction that will not die away. Not in the next decade, not in the next millennium. This has the potential to harm us. Not only by directing us away from things about AI that actually do harm but also by preventing the true potential of AI from being reached.

Hence, what is needed is enough people to speak for the other side. To bring some sense, some logic, and some balance into the endless sensationalism of arguments against AI. History is written on the body of the present. A future postmortem on it should reveal as much truth about AI as it does fear.

2 Comments

It’s becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean, can any particular theory be used to create a human adult level conscious machine? My bet is on the late Gerald Edelman’s Extended Theory of Neuronal Group Selection. The lead group in robotics based on this theory is the Neurorobotics Lab at UC Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution and which humans share with other conscious animals, and higher order consciousness, which came only to humans with the acquisition of language. A machine with primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990s and 2000s. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I’ve encountered is anywhere near as convincing.

I post because on almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there’s lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, by applying to Jeff Krichmar’s lab at UC Irvine, possibly. Dr. Edelman’s roadmap to a conscious machine is at https://arxiv.org/abs/2105.10461

Hardware capabilities, while essential, aren’t the decisive factor for computation. Any Turing machine, if fast enough, can perform any calculation, even those as intricate as the workings of a human brain or a multitude of brains combined.

However, I agree that the missing piece of the puzzle, currently, is the contextual understanding and intent behind these computations. While they’re absent now, we anticipate their emergence in the next few years. Our role is pivotal here, ensuring we guide this evolution towards benevolence, similar to how we would raise our children.

As emphasized by the renowned scientist Geoffrey Hinton, we stand at a critical juncture in history. Our actions today will significantly shape the trajectory of the future.