The idea of AGI as a federation of tools controlled by an agent is quietly becoming a tech reality – and the joke’s on us for not noticing, writes Satyen K. Bordoloi

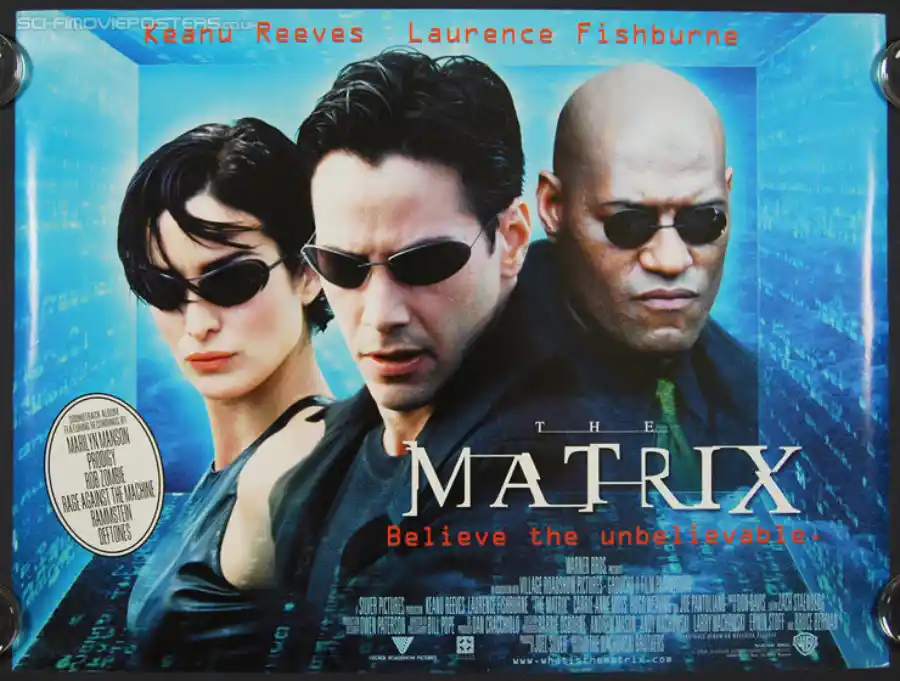

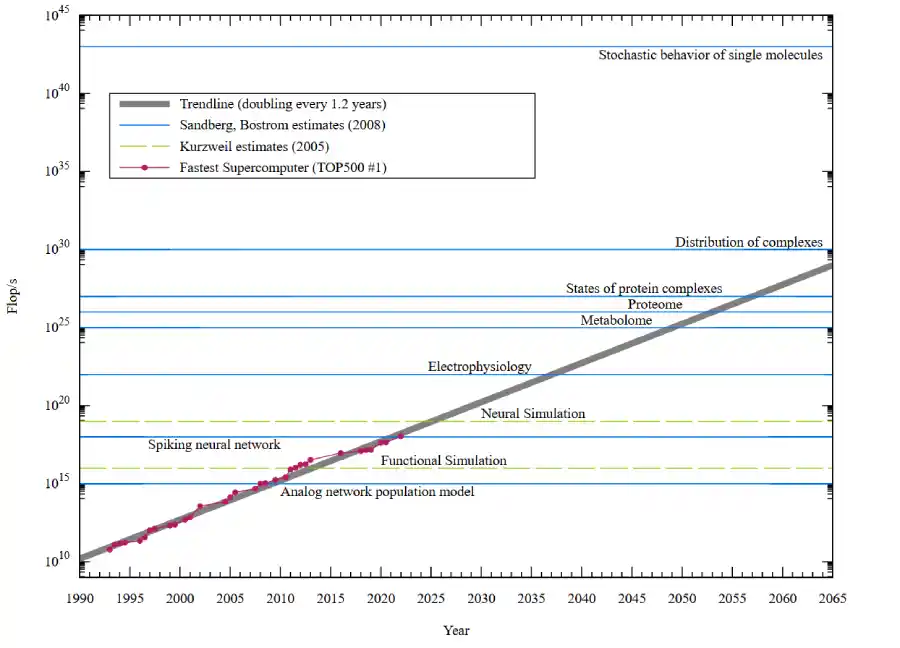

At the turn of the millennium, in 1999 – the year The Matrix ushered us into the illusions of the digital world – the fastest supercomputer was Intel’s ASCI Red, filling a whole room at Sandia National Laboratories. It was the first system to cross 1 TFLOPS, and by 1999 it could do about 2.38 TFLOPS – that’s trillions of floating-point operations per second.

Fast forward to 2026, and the smartphone in your hand can do over 5 TFLOPS. In other words, the thing you use to adjust your lipstick and argue with strangers about Virat Kohli’s form is roughly two of those supercomputers: what was then the pinnacle of human civilisational achievement, and tech that nations might have waged World War III to protect barely a quarter century ago.

25 years ago, schools could have run an essay competition titled ‘What would you do if given access to a supercomputer?’ And kids would have written about securing world peace, ending global hunger, and making the world an equitable place. The same kids, now grown-ups, have forgotten their essays as they swipe left and right on Tinder profiles on the super-duper computer they hold in the palm of their hands. That’s the absurdity of our modern age.

And there’s another absurdity knocking at our door: the impending arrival, if you believe our tech-bros, of AGI – Artificial General Intelligence, the holy grail of AI that has consumed billions of dollars, thousands of think-pieces, and the sleep of everyone from Sam Altman to your friendly neighbourhood tech bro.

The questions when it comes to AGI, I think, are two. First, considering the supercomputer we misuse, do we even need it? Second, have we already built it and begun using it but, with the characteristic human genius for missing the obvious, simply not called it that? That is, have we already begun using AGI for frivolous things, which is the surest proof that a technology has reached mass scale? My answer is a vehement yes. To see why, we have to understand what AGI really is.

The Definition Is the Joke

In the absence of a democratic oracle to guide us to a consensus definition of AGI, I’ll resort to the one on Wikipedia: “a hypothetical type of artificial intelligence that matches or surpasses human capabilities across virtually all cognitive tasks.” The operative phrase there, if you squint at it long enough, is virtually all. Not one. Not a specific subset. Virtually all of them.

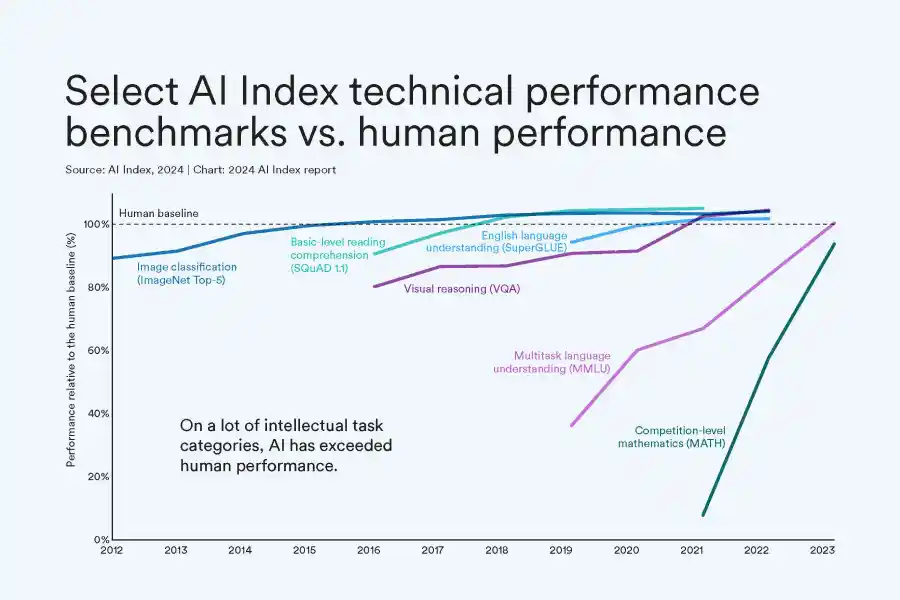

Now look around at the AI you have today. Need to write? Any large language model will do it quicker than most humans on most days (minus the style or flair, of course). Need to solve complex mathematical problems? AI can handle that before you’ve finished reading this article. Drive a car? Waymo has been doing that in San Francisco for years. Compose music? Generate protein structures? Analyse medical images? Check, check, check. There is almost no category of cognitive task where a specialised AI hasn’t at minimum matched, and often embarrassed, human performance.

So what, exactly, are we waiting for when we say we’re waiting for AGI? The answer most researchers gave until recently was: a single system that can do all of these things. One algorithm to rule them all, if you will. A digital god-brain, built from scratch, requiring – if you believed Sam Altman’s reported ask in early 2024 – as much as $7 trillion in infrastructure investment. That figure, for context, is larger than the US federal budget and roughly twice the UK’s entire GDP. Apparently, omniscience doesn’t come cheap.

But here’s the question that I think the AI industry has been slowly arriving at: what if you don’t need to build one omnipotent brain? What if you just… get all the specialist brains to talk to each other?

The Manager Problem, Solved

In an earlier Sify piece on this subject, I proposed thinking about AGI through the lens of Howard Gardner’s Theory of Multiple Intelligences. Gardner, in his 1983 book Frames of Mind, argued that human intelligence isn’t a single measurable thing – it’s a council of distinct cognitive capabilities. He began with seven (linguistic, logical-mathematical, spatial, bodily-kinesthetic, musical, interpersonal, and intrapersonal) and later added an eighth, naturalist. Human general intelligence, on this view, emerges not from some grand unified brain module but from the orchestration of specialised ones. The conductor, not the soloist, is the real star of this masterpiece.

Apply this to AGI, and the math becomes almost embarrassingly simple. You already have the soloists. GPT-class models handle language. AlphaFold handles protein structure with an accuracy that took biology decades to approach. Self-driving systems from Waymo handle spatial navigation. Dedicated models handle image generation, music composition, and code execution.

What you need isn’t a single brilliant soloist to play the entire orchestra by itself. What you need is a conductor – a meta-cognitive layer that receives a problem, identifies which specialist to dispatch it to, collects the results, synthesises them into a coherent answer, and then hands that answer to you and engages with you on it.
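For the technically curious, the conductor’s steps (receive, pick a specialist, dispatch, synthesise) can be sketched in a few lines of Python. Everything here is an illustrative assumption: the `Specialist` class, the keyword matching, and the stand-in functions are hypothetical placeholders, not how any real agent routes work. Real agents typically let a language model do the routing rather than a keyword list.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Specialist:
    """A hypothetical specialist: a name, trigger keywords, and a stand-in function."""
    name: str
    keywords: tuple[str, ...]
    run: Callable[[str], str]


# Stand-ins for real systems; each just echoes what it was asked to do.
SPECIALISTS = [
    Specialist("language", ("write", "summarise"), lambda task: f"[draft for: {task}]"),
    Specialist("protein", ("protein", "fold"), lambda task: f"[structure for: {task}]"),
    Specialist("navigation", ("drive", "route"), lambda task: f"[route for: {task}]"),
]


def conduct(request: str) -> str:
    """Split a request into sub-tasks, dispatch each one, and merge the results."""
    results = []
    for sub_task in (part.strip() for part in request.split(" and ")):
        # Pick the first specialist whose keywords appear in the sub-task.
        match = next(
            (s for s in SPECIALISTS if any(k in sub_task.lower() for k in s.keywords)),
            None,
        )
        if match is None:
            results.append(f"no specialist for: {sub_task}")
        else:
            results.append(f"{match.name}: {match.run(sub_task)}")
    # Synthesis here is a simple join; a real conductor would reconcile the answers.
    return "; ".join(results)


print(conduct("write a speech and plan a route home"))
```

The point of the sketch is the shape, not the cleverness: the conductor itself knows nothing about speeches or routes, only who to ask.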

I dare say we already have that conductor. We call it an agent, an AI agent – not Agent Smith (The Matrix, wink wink). And the tech industry, without quite announcing it, has been working towards strengthening this conductor just for you.

AGI Is Already Here, It Just Goes By a Different Name

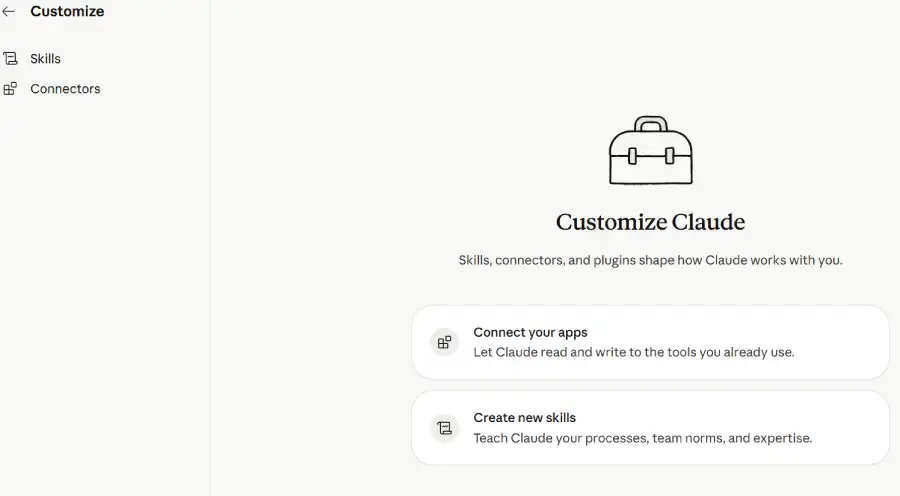

In late 2024, Anthropic introduced something called the Model Context Protocol – MCP – which, stripped of its technical dressing, is a standardised way for an AI agent to reach out and operate external tools and applications. By 2026, Claude has connectors for Adobe’s creative suite, Blender, Instacart, Uber, and Spotify, among many others, and the number is only growing.
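To make that “standardised way” a little more concrete: as I understand the published specification, MCP messages follow JSON-RPC 2.0, and an agent asks a server to run a tool through a method named `tools/call`. The sketch below only builds such a message. The tool name `create_playlist` and its arguments are hypothetical, invented for illustration, and no real connector is being described.

```python
import json
from itertools import count

# Simple incrementing request ids, as JSON-RPC expects each request to carry one.
_ids = count(1)


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Serialise a JSON-RPC 2.0 request asking a server to run one tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and arguments, purely for illustration.
request = tool_call_request("create_playlist", {"mood": "monsoon evening", "tracks": 20})
print(request)
```

Whatever the application on the other end, the agent speaks to it in this one dialect, which is what lets a single conductor direct so many different musicians.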

The practical implication is remarkable: you can tell Claude what you want, and it’ll do it. Ask it to edit a photo or a batch of photos, assemble a grocery list based on past purchases, build a new playlist from past preferences, book an Uber – and it will open the relevant application and do it for you. No specialised knowledge required. No toggling between apps. Just intent, expressed in plain human language, executed by a mediating conductor across the ecosystem of tools you have on your computing system. Almost like an AI assistant would. Or, as per the definition, as an AGI would.

This is not a chatbot with a few extra features; structurally, it is a conductor directing an orchestra. The agent understands your request, determines which tool – or tools – can fulfil it, dispatches the task, and returns a result, self-correcting when needed. It is, by Gardner’s definition applied to AI, general intelligence through the orchestration of the specialised. It is, by Wikipedia’s own definition of AGI, a system capable of matching human performance across virtually all cognitive tasks – because the tasks it cannot do itself, it delegates to a tool that can.

What’s even more remarkable and, if you will, comedic from a competitive standpoint is the integration of OpenAI’s Codex into the Claude Code development environment via an official plugin. Two flagship AI products from rival companies – companies whose CEOs have publicly disagreed on the meaning of AGI itself – are now working together inside a single agentic workflow. The irony is rich: in trying not to call what they’re building AGI, they’ve apparently also dropped their objections to calling each other teammates.

Why Nobody Will Call It AGI

Perhaps the reluctance to use the label is understandable. For one, AGI has been sold as a grand vision. Where’s the grandeur in calling an agent, which you can compare to a conductor or a simple controller, AGI? There’s no magic to it, and no way to demand $7 trillion to build a modular system that already exists. For another, the term AGI carries enormous baggage – existential risk debates, regulatory heat, and the near-impossible expectations attached to decades of science fiction.

If Anthropic, Google, or OpenAI announced tomorrow that they had achieved AGI, the response would be less celebration and more panic, protests, litigation, and congressional hearings. It is far more sensible, commercially and politically, to announce that your AI can now “control apps” or “integrate with third-party services.”

There is also a legitimate philosophical objection. Purists will argue that true AGI requires something more than coordination – genuine understanding, self-awareness, the capacity to reason about novel problems that no tool was designed to handle. They are technically correct, because the current agent-tool model has real limitations.

It is excellent at tasks that decompose neatly into known tools. When it encounters something genuinely novel – a problem no existing application was built to solve – it may try to code and build a new tool, but it can still flounder. The conductor, for now, can only direct the musicians who are actually present; it cannot yet create a new one with the dexterity of a maestro who has had years of practice.

The Modular Path Was Always the Sensible One

What is happening in practice vindicates the modular approach over the monolithic one. A federated system – multiple specialised AIs coordinated by an agent layer – offers things that a single monolithic model structurally cannot. When one module fails, the system doesn’t collapse. When a better specialist tool emerges, it can be slotted in without rebuilding from scratch. The reasoning process is auditable because you can trace which tool handled which sub-task and why. Hallucination risk drops because outputs from one module get cross-checked and corrected against others before final synthesis.
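Three of those properties (one module failing without collapse, a better tool being slotted in, and an auditable trail) can be shown in a small Python sketch. This is a toy under stated assumptions: the `Federation` class, the skill names, and both translator functions are hypothetical, and cross-checking outputs between modules is left out entirely.

```python
from typing import Callable

Tool = Callable[[str], str]


class Federation:
    """A hypothetical agent layer that holds several tools per skill."""

    def __init__(self) -> None:
        self.tools: dict[str, list[Tool]] = {}
        self.audit_log: list[tuple[str, str, str]] = []

    def register(self, skill: str, tool: Tool, prefer: bool = False) -> None:
        """Add a tool for a skill; prefer=True slots it in ahead of older tools."""
        slot = self.tools.setdefault(skill, [])
        if prefer:
            slot.insert(0, tool)
        else:
            slot.append(tool)

    def run(self, skill: str, task: str) -> str:
        """Try each tool in order, recording who handled what and how it went."""
        for tool in self.tools.get(skill, []):
            name = getattr(tool, "__name__", repr(tool))
            try:
                result = tool(task)
            except Exception as error:
                # One module failing does not bring the system down.
                self.audit_log.append((skill, name, f"failed: {error}"))
                continue
            self.audit_log.append((skill, name, "ok"))
            return result
        raise LookupError(f"no working tool for skill {skill!r}")


def flaky_translator(task: str) -> str:
    raise RuntimeError("service down")


def backup_translator(task: str) -> str:
    return task.upper()  # stand-in for real work


federation = Federation()
federation.register("translate", flaky_translator)
federation.register("translate", backup_translator)
answer = federation.run("translate", "hello")
print(answer, federation.audit_log)
```

The audit log is the part a monolith cannot easily give you: after the fact, you can read off which tool was tried, which one failed, and which one delivered.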

This is exactly how human organisations work. No single person is expected to be an expert in finance, engineering, design, and law simultaneously. You build teams. You hire specialists. You create coordination layers to ensure the specialists work toward a common goal. The AI industry has, in building the agentic layer, essentially reinvented the org chart.

Yet, amid all the talk of modular brains versus a single super-powerful one, what matters most is what works. Whether or not we agree to call agent-controlled app ecosystems “AGI,” the functional reality is that AI can now perceive your intent, reason about it, delegate tasks to specialised systems, and return an integrated result – across writing, image editing, logistics, music, code, navigation and more. That is not a chatbot or narrow AI but a system that can assist a human in virtually any cognitive task by assembling the right combination of tools on the fly.

The supercomputer in your pocket went from a national security asset to an everyday convenience. The same is happening with AGI even as we debate its definition. History has a way of making yesterday’s impossible feel mundane very quickly. That is neither bad nor the best possible outcome. But for the time being, perhaps even for the future, it is ideal.
