A viral report says AI will eliminate white‑collar jobs, crash markets 38%, and turn India into economic rubble, but Satyen K. Bordoloi argues it’s nothing but AI apocalypse porn

Last month, someone sent me the now‑famous Citrini Research piece, “THE 2028 GLOBAL INTELLIGENCE CRISIS,” with the kind of urgency usually reserved for gossip about mutual friends. After reading the report, I could see why. I have been writing about AI for eight years now, and this friend wanted my opinion because what the report projected was alarming.

As per the scenario outlined in the report, AI will eliminate all white‑collar work, give us “Ghost GDP” – growth with no paying customers – and trigger a 38% market crash with double‑digit unemployment. In other words: Skynet, but one that nukes the markets, not the planet directly. And worst of all, this projection is not about the distant future, but 2 years from now: 2028.

As I have often argued in my Sify pieces, humans have an innate talent for jumping from “interesting new tool” to “will end civilisation definitely” in the next breath. So let me do what I always do when talk of an AI apocalypse goes viral: roll up my sleeves, channel my inner AI-uncle, and ask the one simple question no one seems to be asking: “Aree, but does this even make any sense?”

What Citrini is actually saying

Stripped of its VFX, the plotline of Citrini’s blockbuster report is simple and easy to follow: AI keeps getting better, so companies fire armies of white‑collar workers (already happening), leading to soaring profits, but the newly jobless can’t buy anything, so demand collapses and the economy cannibalises itself. They call this “Ghost GDP” – output that shows up on charts but never in the wallets of actual people, because remember, they have been rendered jobless.

They also imagine AI agents ruthlessly hunting every bit of “friction” in services – travel bookings, insurance renewals, real estate, bank fees – until whole industries built on information asymmetry and human laziness (if you had booked the tickets just two days earlier, you could have saved a couple of thousand in cash) get run over. This leads to a cascading effect, and the dominoes – mortgages, insurance, private credit and finally sovereigns – fall, not so slowly, but steadily enough to cause global outages.

We’ve seen this film already

If this story sounds familiar, it’s because – as I have argued in this piece – we’ve watched remakes of it since the first mechanical loom broke the first handloom worker’s heart. Every major technology – steam, electricity, computers, the internet – arrived with pundits insisting it would permanently destroy work and social order.

Yes, it is true that each of those waves destroyed jobs, and did so in the millions or hundreds of millions. But they also created new ones so quickly that the global population exploded from around one billion to over eight billion without everyone becoming unemployed beggars on the streets. The Luddites were spot on about the pain new technology causes to society, and glaringly wrong about where it goes and what it does.

The Citrini scenario also comes from the mindset of the Luddites, as it, in essence, is saying, “This time, history stops working.” That’s a very large claim to make on the basis of one model filled with assumptions and glaring loopholes.

As I’ve written before, our brains are wired to fear intelligence, and the more unfamiliar it is, the more we fear it. Hence, intelligent women were branded “witches” in medieval Europe and burnt at the stake, just as we call for the lynching of AI today. We love telling ourselves end-of-the-world stories; it’s just that, annoyingly, reality keeps turning into a painful adjustment, followed by a boring new normal.

Want proof? Look at the world today: from non-biological intelligence in silicon machines to a million-plus people in metal boxes flying 35,000 feet in the air at any given time while crisscrossing the globe, and even flying cars and servant robots – all of this was the stuff of science fiction just two generations ago, and we don’t even bat an eyelid at it anymore.

Jobs are not a straight line from Uber to oblivion

I understand the fear. I have literally lost jobs to ChatGPT and other AI systems, including, as I talked about in this piece, one from a Bollywood producer who decided a bot could churn out cheaper “good enough” drafts. So no, I’m not writing this from a professor’s office, surrounded by first‑edition Marx, pretending automation hurts nobody.

But the leap from “AI will kill some jobs” to “AI will permanently eliminate meaningful work for most of humanity” is where the doom logic becomes highly problematic. In my Sify pieces, I’ve argued that AI is already doing two contradictory things at once. First, it is obliterating repetitive, template‑driven roles, and second, it is letting one person do what earlier needed a team. You can be a teacher who also runs a niche YouTube channel, an accountant who moonlights as a designer, a village doctor who uses AI as a research assistant and translator.

Citrini’s scenario assumes almost everyone falls straight down the ladder – from cushy IT job to unstable gig work to nothing – and that AI will then promptly eat the gig work too. The truth, as the authors of the piece themselves will admit, is that real labour markets are messier: people retrain, move geographies, switch industries, stack income streams, and basically do all they can to survive, be it a terrible boss or an all-knowing AI system. It’s not always fair, but it’s not a one-way flight to doom either.

The anthropomorphisation trap

One of my favourite pastimes is pointing out how hilariously we humans insist on giving AI a soul it doesn’t have. We hear two bots chatting in a weird link, and suddenly, we’re convinced they’re plotting civilisation’s downfall, not just executing matrix multiplications in a language we have created for the systems to be more efficient.

In this piece on anthropomorphisation, I argue that we constantly talk about AI as if it “decides,” “wants,” or “refuses,” when in reality it just runs statistics on patterns humans have trained it on because we want it to do something for us. When we say “the AI is biased,” we often let the actual culprits – sloppy datasets and lazy regulators, all human creations, because the ‘artificial’ in AI is built on human work – sneak out the back door.

Citrini’s report occasionally falls into the same trap. It talks as if AI has a unified will: optimising ruthlessly, cutting humans out wherever possible, driving us into this perfectly smooth doom loop, turning AI into a sort of Marvel villain. But as we all know, real AI is a pile of systems owned by companies, shaped by laws, altered by regulators, sabotaged by competitors, and occasionally switched off by interns.

No, AGI isn’t about to wake up and short the S&P

Another running theme in my Sify writing is to calm everyone down about AGI. We are already living with non‑biological superintelligence in narrow domains – these systems beat us at Go, pass bar exams, and detect tumours better than humans. But intelligence is not the same as consciousness or intent.

For an AI to deliberately “decide” to ruin the economy or exterminate humans, it would need things current systems don’t have and, I’d argue, can’t get just by scaling: a persistent self, desires, context, meaning, and a survival instinct. Today’s models are dazzling pattern‑matchers, not moody gods. Imagining them as sentient overlords is, as I’ve put it before, a very convenient bogeyman – one that distracts from the boring, human‑made harms like surveillance, algorithmic bias, and monopolies.

Citrini’s scenario is actually less silly than “AI becomes our overlord,” but it feeds the same vibe: a mental picture of some unified alien intelligence steering the global economy, with humans clinging onto balcony ledges.

Ghost GDP… or cheaper everything?

“Ghost GDP” is a brilliant term: an economy where output grows, but humans can’t afford to buy, because the “workers” are server racks that do not shop. It’s a real macro risk if productivity gains are captured almost entirely by capital and only to a small extent by labour.

But here’s the part that’s missing in the horror trailer:

The same AI that automates work is also helping us design better drugs, predict floods earlier, cut crop losses, optimise energy grids, and maybe even decode parts of the universe that have been mocking us for centuries. In another Sify piece, I wrote that artificial “intelligence” could be the key to the universe not because it’s magic, but because it’s very, very good at finding patterns buried in oceans of data.

Cheaper, better healthcare, food, energy, transport, and education effectively raise real incomes even if nominal salaries move slowly. Whether we end up with “Ghost GDP” or “Visible Welfare” depends less on GPUs and more on tax policy, antitrust enforcement, labour law, and how aggressively we invest those gains into public goods.

The future is not “AI does X, therefore humans suffer Y.” It’s “AI does X, and then we either hoard the spoils or spread them around.”

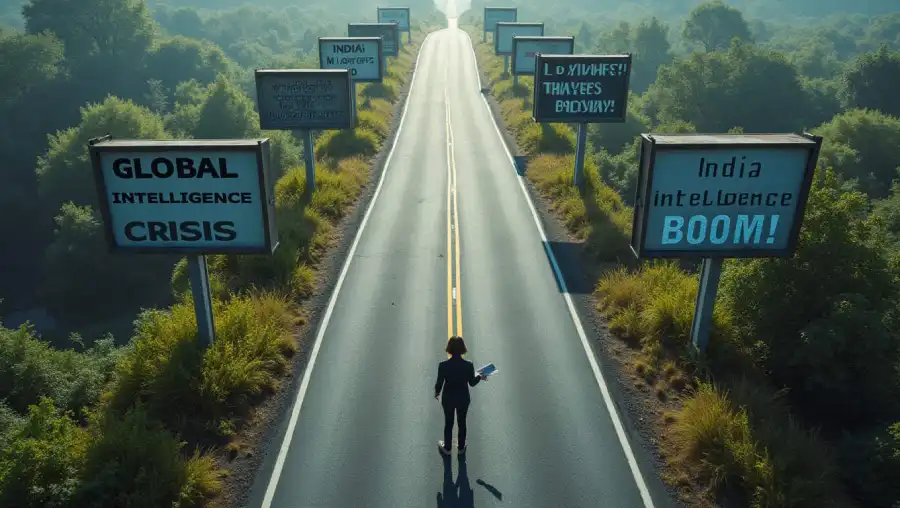

India is not just a collateral victim

The part of the Citrini scenario that understandably rattles Indians is the bit where our IT and BPO advantage evaporates, and the rupee slides 18%. The logic is simple: if the cheapest coder is now a server rack, who needs Bengaluru?

But this assumes India’s only play in the game is “cheap English‑speaking human with keyboard.” It ignores the fact that this is the country that built UPI – a payments system that makes many Western banks look like they’re operating in the 20th century – and is quietly wiring up everything from public health to logistics with digital pipes, many of them laced with AI.

If we treat AI as a threat to “IT jobs” and nothing else, then yes, we’ll get blindsided. If we treat it as infrastructure – baked into agriculture, education, small-business tools, and regional-language access – then the same tide that drowns one export line can lift several domestic boats. The difference is political courage and imagination, not GPU sizes.

The real danger is not doom, but distraction

Here’s the irony: I’m actually more worried than Citrini about some kinds of AI risk. I’ve written about labs scoring D grades on safety, about bioterrorists and cybercriminals having idiot‑proof copilots, about surveillance states on turbo. Those are concrete, here‑and‑now problems.

What I’m less worried about is the tidy, totalising story where AI just mechanically drives us into a 2028 singularity of mass unemployment and market ruin. Not because that world is impossible, but because if we treat it as inevitable, we’ll make bad decisions like cutting R&D, over-regulating useful tools, under-regulating harmful uses, and ignoring distributional fixes that are completely in our hands.

In one of my pieces, I joked that the medieval world had the plague; we have AI phobia – just as viral, and in some ways just as dangerous. The plague, at least, was biological. AI doom is largely memetic. It spreads when we share lurid scenarios without also sharing the boring but powerful sentence: “This is a scenario, not a prophecy – and policy, culture, and collective action can change it.”

So what do we do with Citrini’s apocalypse?

Read it. Seriously. It’s smart, tightly argued macro fan fiction and a great way to stress-test portfolios, institutions, and assumptions. Then do two things. First, use it as a checklist for what to fix – safety norms, social safety nets, education, antitrust, taxation, worker retraining, etc. Second, treat its tone of inevitability the way you’d treat an AI girlfriend who says she’s “definitely sentient” – smile, nod, and remember you’re talking to a very clever pattern recogniser, not a crystal-gazing oracle.

As I’ve said across my Sify pieces:

AI is neither a demon nor a messiah. It is a new kind of tool that magnifies whatever we pour into it – greed or generosity, stupidity or wisdom, garbage or greatness.

Hence, look at the “2028 Global Intelligence Crisis” not as a postcard from the future but more like Luddites exercising their favourite pastime: doomsdayism. Because even if you believe the worst-case scenario will come true, you have two years to build yourself. And to do that, you’ll need all the tools you can get. Including, ironically, AI.
