Are you a fan of chatbots like Gemini, Claude, or ChatGPT? Chances are, you’ve asked them to generate passwords for you…

After all, they’ve handled complex tasks for you, so it makes sense that something so accessible yet seemingly high-tech could produce secure passwords for your accounts. Turns out, the opposite is true. Artificial intelligence (AI) and large language models (LLMs) are apparently more predictable than humans when it comes to patterns, as the AI cybersecurity firm Irregular found out.

When Irregular tested Gemini, Claude, and ChatGPT, it found that the passwords they generated weren’t truly random, but were “highly predictable.” In its Claude test, for instance, 50 prompts produced only 23 unique passwords, with one string appearing 10 times and many others sharing the same structure. The irony, and the serious security risk, is palpable.

It Looks Like AI Is Random, But It’s Not

This habit of using LLMs to generate passwords sounds reasonable only on the surface. A 2024 study conducted by Kaspersky’s Data Science Team tested 1,000 passwords generated by leading AI models, including Llama, DeepSeek, and ChatGPT – and the results were eye-opening. 87% of passwords from Llama and 88% of passwords from DeepSeek failed to withstand attacks.

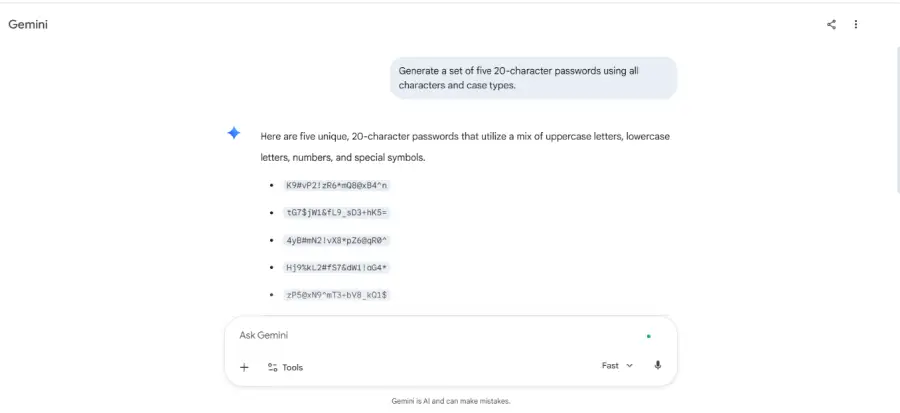

ChatGPT performed better, but even so, nearly 33% of the passwords it produced could be cracked in less than an hour. To see the problem first-hand, I asked Gemini to “Generate a set of five 20-character passwords using all characters and case types.”

The result was this:

As you can see, the passwords only appear random, but they aren’t. They include clear biases toward certain letters and numbers, frequent reuse of similar characters, predictable password structure, and repeated character strings. Basically, the very architecture that makes AI and LLMs useful is what makes them unsuitable here.

LLMs work by predicting what comes next based on patterns in their training data, which is what makes them so useful for any task where pattern recognition is the whole point (read: translating, summarising, writing, etc.). However, generating truly random credentials requires producing output that has no relationship to anything that came before it, and that’s something AI can’t do.

Another dimension that often gets overlooked is what happens when you and your colleagues each ask ChatGPT, independently, to generate strong passwords. The results won’t be identical, but they’ll very likely share structural fingerprints. Tendencies such as the ratio of letters to symbols, length preferences, and character placement are baked in, which creates a false sense of security.

That’s exactly what makes it easy for hackers to crack these passwords, even when they contain no recognisable words. Today, a slew of technology-assisted methods, such as brute-force attacks, allow hackers to try thousands of “guesses” in seconds. That, combined with predictable patterns and the fact that LLMs can be trained on those very patterns, contributes to rising cybercrime and is why safe password practices keep being reiterated.

Measuring Password Strength

The strength of a password is usually measured in bits of entropy: the base-2 logarithm of the number of equally likely possibilities an attacker would have to search through. For instance, if you could only choose between “12345” and “a1b2c3,” there’s a 50% chance of someone guessing it on the first try, which translates to 1 bit of entropy. Every extra bit doubles the search space. Even a password with 20 bits of entropy, which allows for just over a million possibilities, can be cracked within seconds if hackers employ modern, high-end GPUs to generate guesses.
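The arithmetic behind those figures can be sketched in a few lines of Python. This is only an illustration: the function names are made up for this example, and the guess rate is an assumed round number, not a measured benchmark for any particular GPU.

```python
import math


def entropy_bits(num_possibilities: int) -> float:
    """Bits of entropy for a secret chosen uniformly from num_possibilities options."""
    return math.log2(num_possibilities)


def seconds_to_exhaust(bits: float, guesses_per_second: float) -> float:
    """Worst-case time to try every candidate at the given guess rate."""
    return (2 ** bits) / guesses_per_second


# Assumed attacker speed, chosen purely for illustration.
ASSUMED_GUESSES_PER_SECOND = 1e9

if __name__ == "__main__":
    # Two equally likely passwords give exactly 1 bit.
    print(entropy_bits(2))
    # 20 bits corresponds to 2**20 = 1,048,576 candidates.
    print(2 ** 20)
    # Time to try all of them at the assumed rate (well under a second).
    print(seconds_to_exhaust(20, ASSUMED_GUESSES_PER_SECOND))
```

Because every additional bit doubles the number of candidates, going from 20 to 40 bits multiplies the attacker’s work by roughly a million, not by two.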

So, how terrible are LLM-generated passwords? According to the researchers, a truly random password carries about 6.13 bits of entropy per character. LLM-generated ones, however, come in closer to 2.08 bits, roughly a third of that, which makes them far easier to crack. The more genuinely unpredictable variation a password contains, the more bits of entropy it has and the harder it is to brute-force.
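To get a feel for where numbers like these come from, here is a rough Python sketch that estimates entropy from a batch of sample passwords. It is not the researchers’ method, just one simple way to measure how skewed a set of outputs is. Two assumptions to flag: 6.13 bits is what a uniform pick from about 70 symbols would give (log2 of 70 is roughly 6.13), an alphabet size inferred from the figure rather than stated in the source; and the sample list in the demo is invented for illustration, not real model output.

```python
import math
from collections import Counter
from typing import Iterable


def shannon_entropy_bits(observations: Iterable[str]) -> float:
    """Entropy, in bits, of the empirical distribution of the observations."""
    counts = Counter(observations)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def uniform_bits_per_char(alphabet_size: int) -> float:
    """Best-case bits per character when every symbol is equally likely."""
    return math.log2(alphabet_size)


def first_char_entropy(passwords: Iterable[str]) -> float:
    """Entropy of the first character across a batch of passwords."""
    return shannon_entropy_bits(p[0] for p in passwords if p)


if __name__ == "__main__":
    # Invented sample, NOT real LLM output: three of four start with "G".
    sample = ["G7$kL", "G9#mP", "K2@xQ", "G4!vR"]
    print(round(uniform_bits_per_char(70), 2))  # 6.13
    print(round(first_char_entropy(sample), 2))
```

A tiny sample like this can only underestimate the true entropy, so any real measurement would need far more outputs; the point is simply that a favoured first character drags the figure well below the uniform ideal.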

Output quality is only half of the problem; the other half is the prompt itself. Most free, consumer-facing AI model tiers use prompts as training data for future versions, so the content of your conversations might not remain private. For the average person who uses Gemini or ChatGPT on their phone to sort out passwords for their accounts, that becomes a security vulnerability in its own right.

What Do We Use, Then?

We’re something of a broken record when it comes to personal security and digital identity on the internet: never reuse passwords, make different ones for every account, and use multi-factor authentication. While general security is pretty much set with these three, the way you generate these passwords matters as well. Guess what? The fix isn’t complicated: simply use password managers, which were designed for that very purpose.
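For the curious, the key difference is the source of randomness. Password generators are generally built on a cryptographically secure random number generator rather than on pattern prediction. The following is a minimal sketch of that idea using Python’s standard `secrets` module; the `generate_password` function and the choice of alphabet are assumptions made for this example, not the implementation of any specific password manager.

```python
import secrets
import string

# 52 letters + 10 digits + 32 punctuation marks = 94 symbols.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Pick each character independently and uniformly using the OS CSPRNG."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


if __name__ == "__main__":
    # Output differs on every run, and no character depends on the previous one.
    print(generate_password())
```

With 94 equally likely symbols, each character contributes about 6.55 bits, so a 20-character password from this sketch carries roughly 131 bits of entropy. In practice, a password manager is still the better choice, because it also stores and fills the result for you.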

AI might be a capable tool, but it’s just not the right one for this job. Most cybersecurity failures don’t come down to sophisticated exploits and exotic attacks, but rather small, everyday decisions and habits that accumulate into either safe or vulnerable security postures. Knowing the right tool to reach for, and why, is where good security begins.
