Forget the spyder-cam at a cricket match: AI is making the once-impossible infinite-cam possible, letting us see a single shot from an endless barrage of angles, writes Satyen K. Bordoloi

The spyder-cam, when it was introduced in cricket, gave fans a view to die for. The camera was literally in the players’ faces, taking us so close that it made us love the game even more. Yet I remember my irritation when an opposition player’s ball was caught by one of ours, but the catch was invalidated because the ball had touched the spyder-cam cable on its way up.

Is there no way to get onto the field with the players while maintaining distance, I remember wondering. That was then. Today, it is possible. And not from just one angle: Artificial Intelligence makes something like an ‘infinite-camera’ possible. This technology is slated to revolutionise not just sports broadcasting but also cinema and, indeed, everything that uses cameras to record.

With this, you no longer just watch cricket from your sofa, but step into it. You could choose to attach your view to the ball, experiencing the dizzying speed as it leaves Jasprit Bumrah’s fingers and following it until it is about to be hit. Or you could run alongside Virat Kohli as he sprints to make a quick run, feeling the tension as a fielder aims at his stumps.

Or, days after the final ball of a match, you could return to the empty stadium in a digital environment, place a virtual camera exactly where the winning six was scored, and watch it from the perspective of the batsman’s rapidly beating heart.

This seems sci-fi, but it is close, possibly coming to a cricket match near you. It is not yet the present, but it is the near future of sports broadcasting, powered by a wave of artificial intelligence (AI) and computer vision technologies.

Companies like Arcturus are leading this charge, using AI to discard the physical limitations of traditional production and handing the creative reins over to viewers and content creators. This transformation isn’t incremental, but a complete reimagining of how we capture, experience, and remember live sport.

Digital Twin as the Future of Sports Production

For as long as live sports broadcasting has existed, it has demanded its own production and logistical planning expertise. Broadcasters would arrive at a stadium days in advance to rig miles of cabling, install heavy cameras in fixed positions, and coordinate a small army of camera operators.

The final broadcast, while polished, has so far been nothing but a curated selection from a finite set of physical viewpoints, dictated by the choices that a professional director at the command centre made.

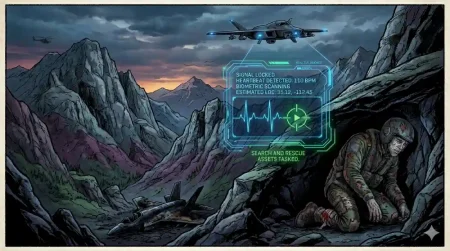

But AI is not just changing how this is done; it is shattering this model. At the forefront is Arcturus, a technology provider specialising in edge AI and vision systems. Their flagship platform, Arcturus Stage, creates a “movable hologram” of the game via a “constellation of sensors”, which is simply a network of fixed cameras and edge computing devices placed all around the stadium.

Their proprietary AI system analyses a real-time visual data feed from all the cameras covering the action to construct a broadcast-quality 3D digital twin of the entire field and every player on it.

But this isn’t just simple 3D modelling. Rather, it is a dynamic, live representation of reality, as the AI tracks the skeletal movements of every athlete, the trajectories of the ball and bat, and the precise geometry of the field. The result is a complete volumetric capture of the event. For a broadcaster, this single digital twin provides endless coverage options.
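To give a feel for how several fixed cameras can pin down a position in 3D, here is a minimal sketch of midpoint triangulation in Python. It is a textbook technique, not Arcturus’s actual method, which the company has not published here; the function name `triangulate`, the camera positions and the coordinates are all hypothetical. Each camera contributes a ray from its position towards the ball, and the ball is estimated as the midpoint of the shortest segment joining the two rays.

```python
def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def scale(a, s):
    return tuple(x * s for x in a)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def triangulate(o1, d1, o2, d2):
    """Estimate a 3D point from two camera rays of the form o + t * d.

    o1, o2 are camera positions; d1, d2 are directions towards the object.
    Returns the midpoint of the shortest segment joining the two rays.
    """
    w = sub(o1, o2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; position cannot be recovered")
    t1 = (b * e - c * d) / denom  # distance parameter along ray 1
    t2 = (a * e - b * d) / denom  # distance parameter along ray 2
    p1 = add(o1, scale(d1, t1))
    p2 = add(o2, scale(d2, t2))
    return scale(add(p1, p2), 0.5)

# Hypothetical setup: two cameras 10 m up on stadium masts, ball 1 m off the ground.
cam_a, cam_b = (0.0, 0.0, 10.0), (50.0, 0.0, 10.0)
ball = (20.0, 30.0, 1.0)
estimate = triangulate(cam_a, sub(ball, cam_a), cam_b, sub(ball, cam_b))
```

A real system would have to detect the ball in each image, calibrate every camera and fuse many noisy rays per frame; this sketch only shows the geometric core.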

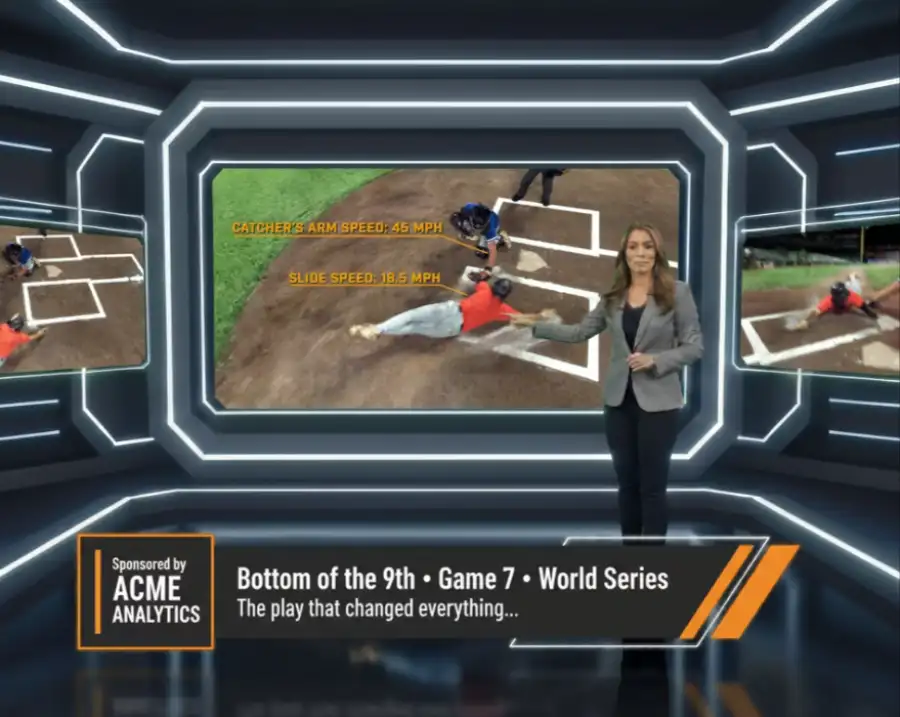

Instead of cutting between a few dozen fixed cameras (and a spyder-cam), a producer can place a virtual camera anywhere within this 3D space, even in positions where a physical camera could never go: right in front of the batsman’s face as the ball is about to reach him, or in first-person perspective right next to a fielder as he sprints to catch the ball racing to the boundary.
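What “placing a virtual camera” means can be sketched with the standard pinhole camera model. The Python below is an illustration under stated assumptions, not the Arcturus Stage API: the function `project`, the focal length in pixels, the frame size and the scene coordinates are all hypothetical. Given a camera position and a point to look at, it computes where any 3D point of the digital twin would land in the virtual camera’s frame.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    n = math.sqrt(dot(v, v))
    return tuple(x / n for x in v)

def project(point, cam_pos, look_at, focal_px=1000.0,
            width=1920, height=1080, up=(0.0, 0.0, 1.0)):
    """Project a 3D point into the pixel frame of a virtual pinhole camera.

    Returns (u, v) in pixels, or None if the point is behind the camera.
    Assumes the viewing direction is not parallel to `up`.
    """
    forward = normalize(sub(look_at, cam_pos))
    right = normalize(cross(forward, up))
    true_up = cross(right, forward)
    rel = sub(point, cam_pos)
    x, y, z = dot(rel, right), dot(rel, true_up), dot(rel, forward)
    if z <= 0:
        return None  # behind the camera, so not visible
    u = width / 2 + focal_px * x / z
    v = height / 2 - focal_px * y / z  # image rows grow downwards
    return (u, v)

# Hypothetical shot: camera at the batsman's eye level, looking at the incoming ball.
eye = (0.0, -2.0, 1.7)
ball = (0.0, 20.0, 1.0)
centre = project(ball, eye, ball)
off_side = project((1.0, 20.0, 1.0), eye, ball)
behind = project((0.0, -10.0, 1.7), eye, ball)
```

Moving the camera is then just a matter of changing `cam_pos` and `look_at`; no physical rig is involved, which is why positions impossible for a real camera become available.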

This technology, as you can see, is just the opposite of traditional methods. Rigging hundreds of robotic cameras to capture every angle is prohibitively expensive, technically complex, and visually cluttered. Arcturus has figured out a more efficient option as it captures the entire environment all at once, and the perspectives are generated from the data, not from another camera.

As the company has noted, the primary challenge is moving away from a technological one of “can we capture this angle?” to a purely creative one of “what new stories can we tell with this freedom?”

Beyond Sports & Into the Future of Cinema

While sports provide the perfect ‘ground’ to prove this tech, its basket of applications extends far beyond the sports arena. This ability to create a real-time digital twin of any physical space, and with such precision, opens up a universe of possibilities for, first of all, all forms of unscripted and competition television, and then for the way films can be made and viewed.

Imagine a talent show like Boogie Woogie. Instead of relying on a few well-placed cameras to capture the dancers’ unbelievable grace, this technology could capture the entire ballroom volumetrically. Viewers at home could then choose to watch the dance from the judges’ perspective, float above the dancers to appreciate the geometric patterns of their choreography, or just watch their faces and expressions.

In a competition like Fear Factor, viewers could follow an athlete’s run from a camera tethered to their body, or analyse a complex obstacle from a frozen, 360-degree angle to understand how they overcame it.

Its use in cinema can be even more profound. Directors have spent a century and a quarter meticulously planning shots, moving actors and cameras to compose, and record, the most beautiful frame in the most perfect shot. With volumetric capture, a single performance could be filmed, and the “camera” work could be done entirely in post-production.

A director could shoot a crucial dialogue scene once, and later decide to view it from a close-up on an actor’s trembling hands, a wide shot emphasising their isolation in a room, or a low-angle shot conveying power dynamics: all from the same performance data and recording. Editing will thus not just be an act of cutting a scene, but of composing the shot as it is being edited. Perhaps the director need not even do this, and can leave it to the viewer to decide how she watches the film.

This points to a future where the movie-watching experience will become both deeply personal and endlessly repeatable. You could watch a film for the first time to follow the plot, and then rewatch it to follow a specific character through every scene from their perspective. A single, masterfully acted scene, like one in an Oscar-winning movie, could be viewed from a million different angles, revealing new subtleties and emotional depths with each viewing. It would be an experience you can’t believe is possible today, as it would dissolve the boundaries between the audience, the story, and the characters enacting that story.

New Creative Freedom of Infinite Replays

For decades, content creators have been bound by physics: the weight of a camera, the length of a lens, the need for a camera operator to stand somewhere safe. These physical constraints dictated the visual language of sports and cinema. AI removes these constraints. The camera is no longer a physical object but a data point, a perspective that can be manifested anywhere in a digital space. This does more than remove constraints: it frees creators to make anything they fancy, not just what the various limitations pressed upon them ‘allow’.

This freedom gives rise to some fantastical, fascinating possibilities. Think of a historic sporting moment, like, let’s say, India winning the 2026 T20 World Cup. In the past, that match would have a definitive broadcast, with a single, authoritative version of the game. But in the AI-driven future, that singular broadcast would cease to exist. Instead, the match would now exist as a 3D digital twin, and a “new” broadcast can be created every single day from it. A fan could spend weeks generating their own highlight reels.

They could watch a single six from the perspective of the bowler, the fielder on the boundary, the crowd, or even the stitching on the ball. A single moment would become inexhaustible, stretching as far as one’s imagination. You could watch the same match a hundred times and never see the same angle twice, ensuring you never tire of reliving the victory.

Can All This Information Cause an Overload?

However, this brave new world of infinite choices is not without its potential downsides. In such a scenario, the central question we’ll ask is: is so much information really needed? Is there a point where infinite choice becomes paralysing rather than liberating?

For the average viewer, the carefully curated experience that a professional director creates has immense value. The director guides our emotions, showing us the key moment, the crucial reaction, the narrative thread. If every viewer is their own director, we risk losing that shared, collective experience of watching something. The art of storytelling could be diluted into a sea of raw data.

And let us not forget the risk of “analysis paralysis.” In sports, the endless ability to freeze and scrutinise every moment could undermine the flow and instinctive beauty of a game. If every LBW call can be dissected from 50 angles in 8K resolution, will the game become more about forensic examination and less about athletic prowess?

In cinema, if a filmmaker’s carefully composed shot can be completely deconstructed and re-framed by the viewer, would it diminish the director’s artistic intent? The technology offers a god-like view, but sometimes, the magic of a performance lies in its mystery and the perspective chosen for us, something this technology threatens to upend.

From spyder-cam to infinite-cam, technology has come so far within a decade that we no longer ask “What can we capture?” We ask: “What stories will we choose to tell?” The technology to create an infinite universe of angles from a single shot is here. The challenge now is learning how to do so without losing the human connection that makes a story worth watching in the first place.
