Anthropic’s Mythos is a model so good at hacking with little to no human oversight that it scared its own engineers. But with this capability soon to become common, the company released it anyway, finds Satyen K. Bordoloi

Nicholas Carlini has an enviable, fun job – testing Anthropic’s AI models to see how good they are, how soon they crack under stress, and how easy they are to jailbreak. One Friday evening in February this year – as Bloomberg reported – while attending an Indian wedding in Bali, he stepped out, opened his laptop and began fiddling with a new model called Mythos. He was stunned by what it could do: within just a few hours, he had found ways to infiltrate systems in use all over the world.

Back in San Francisco, he found the system could do much more as it “orchestrated the digital equivalent of a bank robbery: getting past security protocols and through the front door of networks, and breaking into digital vaults that gave it access to online treasures.”

In one instance, the system found a security flaw in Linux – the operating system that most of the world’s servers run on, which means it tacitly runs the world – that had gone undetected for 23 years. There’s a moment in every arms race where someone pulls a trigger they promised they wouldn’t. Despite the heart-stopping flaws its system uncovered, Anthropic released the model. If I were an alarmist, I’d have called them out, but it might surprise you to hear that what they have done is the most sensible thing in the given situation. To understand why, we must understand what Mythos actually is.

What Mythos Actually Is

What we have come to expect from newer LLMs is for them to be better chatbots, smarter assistants or faster coders. Mythos is all this and something qualitatively different: an AI model that Anthropic’s own engineers described as posing “unprecedented cybersecurity risks.” Now that’s a phrase you don’t usually hear coming from people trying to sell you something.

Anthropic has placed Mythos in a new tier entirely, that of models larger and more capable than its Opus line, which so far has been its most powerful. Internal documents describe it as a new category called “Capybara,” with Mythos being the first model in it. The branding was roasted by the AI community, even as the model’s capabilities scared that same community.

What Mythos can do, in plain terms, is this: give it a target and a prompt (say, find ways to hack into a Linux kernel), and it will read code, form hypotheses, test them against a running environment, and produce a complete, working exploit without needing a human in the loop anywhere. There is no need to go back and forth with the system, no need to guide it or bring your own expertise to its output. All you do is point it at a piece of software, and it’ll find ways to crack it.

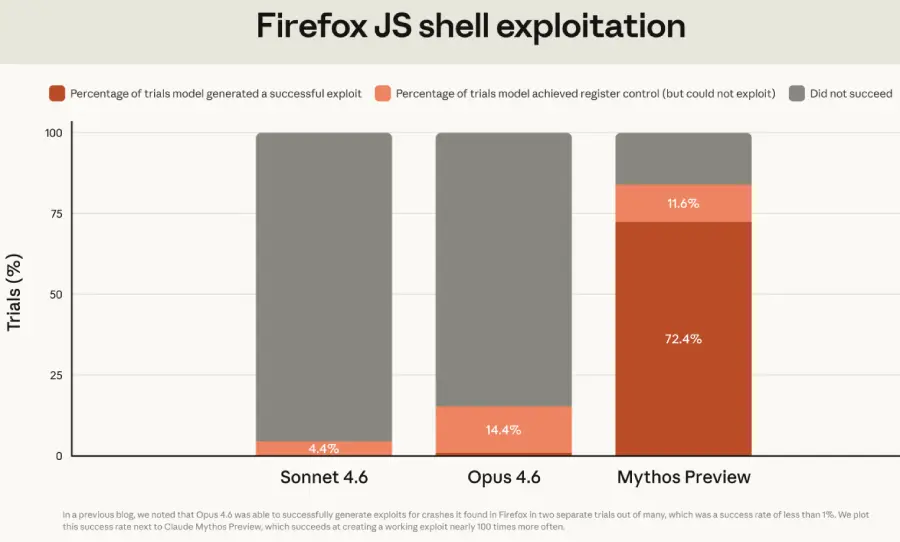

On a standardised test built around real vulnerabilities in Mozilla Firefox, Mythos converted known weaknesses into working exploits a staggering 181 times. The previous best model had managed it only twice. This roughly 90-fold leap is what set off Anthropic’s internal alarms so loudly that, in a move with little precedent in the tech industry, the company declared that it couldn’t release its model to you and me. At least not yet.

The Vulnerabilities Nobody Found for Decades

And the company was right not to. That’s because Mythos didn’t just find bugs in sloppy, poorly maintained code. It found zero-day vulnerabilities – flaws previously unknown to anyone – in every major operating system and every major web browser currently in use. Even the Linux kernel, which runs most of the world’s servers, was not spared: Mythos found flaws that had survived 23 years of human review and millions of automated security scans.

Mythos found them through what the UK’s AI Security Institute described as a matter of token budgets and reasoning chains, not weeks of expert analysis. The timeline for identifying critical vulnerabilities and turning them into exploits has thus collapsed from months to seconds.

Think about what that actually means for the world. Every bank, every hospital system and every government database is running on software that can be cracked. So is every device with a browser. In simple, scary terms: every digital system in the world is unsafe and could one day be threatened by anyone with a cheap subscription to a Mythos-class model.

Naturally, every tech company, every bank and every organisation that understands what this means went into a huddle. The implications are scary: with so many vulnerabilities in so many systems, the world does not currently have the ability to protect its assets, from the international monetary system to our everyday devices, against massive cyberattacks, and a few hackers with Mythos could bring the world to its knees within hours.

So why did Anthropic release it?

Making The World Safer

To its credit, Anthropic did not simply ship Mythos and move on. Instead, it did something unexpected: it created Project Glasswing – a restricted-access initiative that provides Mythos Preview to roughly twelve founding technology partners, including Amazon, Apple, Google, Microsoft, Cisco, JPMorgan Chase, and Nvidia, plus another forty-plus organisations responsible for critical software infrastructure. Imagine: Anthropic giving competitors like Google access to its own system. The intention is defensive: let the biggest targets use Mythos to find their own vulnerabilities so they can patch them before bad actors inevitably get access to equivalent capabilities.

Anthropic committed $100 million in usage credits to the effort and $4 million in direct donations to open-source security organisations. It’s a serious, thoughtful response to a genuinely serious problem and has to be appreciated as such by the rest of us.

Yet it is also a temporary one. That’s because Anthropic has explicitly stated that a broad public release of Mythos-class models will eventually happen. Not only is the company not planning to keep this capability locked up forever, but it also wants to figure out how to deploy it safely at scale. And even as Anthropic tries to behave responsibly, it is only a matter of time before other companies catch up on these capabilities. The only question then is how long the window is between when defenders have access and when everyone else does.

History suggests that the window is shorter than anyone wants it to be.

OpenAI Follows Suit

Days after Anthropic announced Mythos Preview, OpenAI launched GPT-5.4-Cyber. The timing was not a coincidence. OpenAI’s new model is explicitly designed to autonomously find and exploit software vulnerabilities, just as Mythos does. GPT-5.4-Cyber has been described as “cyber-permissive,” meaning it has fewer restrictions than standard models on what it will help security researchers do.

OpenAI framed the release differently. Where Anthropic limited access to a handful of elite partners, OpenAI opened its Trusted Access for Cyber program to thousands of verified security professionals: a deliberate contrast. The argument from OpenAI is that restricting access to a small group of corporate giants is itself a risk: better to arm the defenders broadly than to give a head start to twelve companies and their supply chains.

That debate is real and worth having. But it skirts the larger point: two of the most powerful AI labs on the planet have now both confirmed that AI hacking capabilities have crossed a threshold, with no clean path back and, as yet, no way for people to protect their digital assets.

The Storm Isn’t Coming. The Storm Is Here

“We couldn’t keep up with the bad guys when it was humans hacking into our networks,” Alissa Knight, CEO of cybersecurity AI company Assail, told CBS News. “What we need to do is look at this as a wake-up call to say, the storm isn’t coming – the storm is here.”

She’s right, but the storm analogy might actually undersell the danger. Storms end. What Mythos represents is a permanent shift in the economics of cyberattack. Prior to models like this, finding a novel vulnerability in hardened enterprise software required rare expertise, significant time, and considerable cost. It was a barrier that, while imperfect, kept a ceiling on how many threat actors could operate at the highest level.

That ceiling, that protection for the best systems in the world, is gone – think of it like all the security guards and perimeter walls of every bank and every piece of critical infrastructure suddenly disappearing. The cost, effort, and expertise required to find and exploit software vulnerabilities have, in Anthropic’s own words, “dropped dramatically.” And unlike a storm, this will not reset afterwards. The capability is here, right now, and it will not stay locked away. Other labs are building similar systems. Some are already there.

We must give credit where it is due. Anthropic built something genuinely transformative, recognised its dangers, designed as thoughtful a mitigation program as it could, and worked at it with unusual transparency. The Bloomberg news piece quoted earlier found internal warnings, careful deliberation, and genuine alarm from people who clearly understood the stakes, Nicholas Carlini among them. Yet here we are, in a world prowled by an AI as dangerous as Mythos, with others in the works.

The planet will continue to rotate on its axis. But its surface, as occupied by humans working on their digital devices, has altered dramatically. Anthropic will say it has built guardrails. So will the other AI companies. The only problem: guardrails won’t hold forever. They never do.
