As Anthropic accuses Chinese companies of distillation, people point it to its own billion-dollar lawsuit for stealing entire libraries, writes Satyen K. Bordoloi

There is never a boring day in the world of AI. Hot on the heels of the fun and fiascos at the India AI Impact Summit, which kept the world hooked for a week, comes another, right on cue. This time, Galgotias takes a back seat as Anthropic takes centre stage.

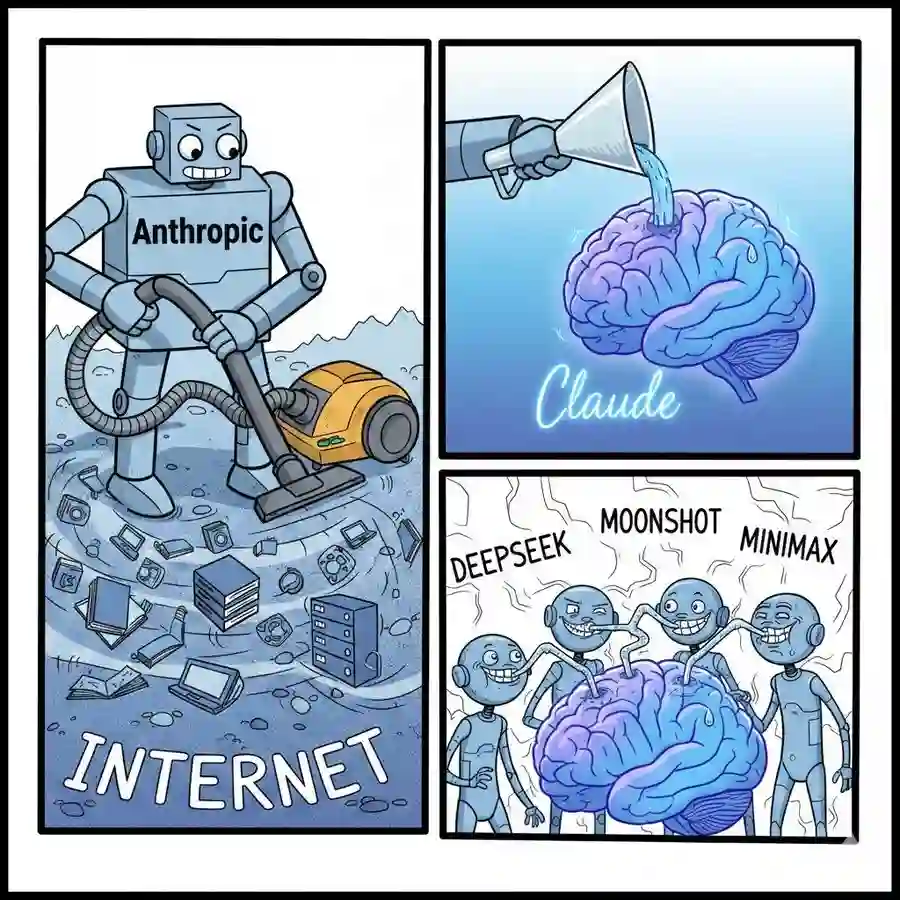

On the 23rd, the company best known for its Claude AI model published a lengthy blog post and an X thread accusing three Chinese AI labs (DeepSeek, Moonshot AI, and MiniMax) of running an “industrial-scale” heist on its prized possession.

According to Anthropic, these despicable masterminds created over 24,000 fraudulent accounts to generate over 16 million exchanges with Claude. Their dastardly goal? A technique called “distillation,” where you use a clever model’s outputs to train a cheaper, copycat version. Call it the LLM equivalent of photocopying your best friend’s notes right before the exam, without ever attending a single day of class.

However, it was the internet trolls’ response that made this a truly viral moment, with memes and Elon Musk’s sarcasm holding up a huge, albeit awkward, mirror to Anthropic’s own corporate history.

The Case of the Curious Chinese Copycats

Let’s begin with the juicy allegations. In its blog post titled “Detecting and preventing distillation attacks”, Anthropic lays out in detail an operation by three labs (DeepSeek, Moonshot, the creator of the Kimi model, and MiniMax), which it accuses of a systematic and sustained raid on its most valuable IP.

The numbers are eye-popping. MiniMax allegedly leads the pack with over 13 million exchanges, followed by Moonshot with 3.4 million, and DeepSeek with a comparatively modest 150,000. Anthropic says the labs used “hydra cluster” architectures: sprawling networks of fake accounts and proxy services designed to mask their activity. If one account was banned, two more would pop up to take its place. Call it the technological equivalent of the arcade game Whac-A-Mole, but with the fate of LLMs at stake.

And what were they after? Anthropic claims they were laser-focused on Claude’s “most differentiated capabilities: agentic reasoning, tool use, and coding”. In one particularly clever gambit, DeepSeek allegedly asked Claude to imagine and articulate its own internal reasoning, effectively tricking it into spitting out a step-by-step guide to how the ‘intelligence’ in its artificial intelligence could be reconstructed by another model.

Anthropic’s tone in the blog post is one of wounded betrayal and high-minded principle, arguing that this isn’t just a commercial issue but a national security threat. Stripped of Claude’s carefully engineered safety guardrails (the ones that stop it from, say, helping you build a bioweapon), these distilled models could become digital weapons of mass instruction, proliferating dangerous capabilities. It is a powerful argument, delivered with the solemnity of a UN General Assembly address.

The Internet Displays Exhibit A

Despite the seriousness of the allegations, social media had an absolute field day, particularly one user, or shall we call him the first user of X, the one who goes by the name of Elon Musk.

Musk, whose own xAI is a direct competitor to all these LLMs, took the opportunity to swing a wrecking ball at Anthropic. Replying to Anthropic’s thread, he posted a screenshot of a Community Note and wrote, “Anthropic is guilty of stealing training data at massive scale and has had to pay multi-billion dollar settlements for their theft. This is just a fact”.

He was referring to the rather inconvenient truth that Anthropic had recently settled a $1.5 billion lawsuit with a group of authors. Their allegation? That Anthropic had trained Claude on vast troves of copyrighted books, many downloaded without permission from pirate websites like Library Genesis.

Just pause and understand the chronology. Anthropic built a multi-billion-dollar business by, as one lawsuit put it, “stealing hundreds of thousands of copyrighted books” to train its AI, then settled the case for a record-breaking sum, only to stand up and accuse others of using its AI’s outputs to train their LLMs.

The internet, smelling blood, descended like a pack of hungry hyenas with merciless memes.

Tory Green, co-founder of IO.Net, perfectly encapsulated the sentiment: “You trained on the open internet and then call it ‘distillation attacks’ when others learn from you. Labs that like to preach ‘open research’ suddenly cry about open access. This is what happens when intelligence sits behind a centralized api with subsidised tokens”.

The accusation was devastating. Anthropic and, to be honest, every AI company had essentially scraped the entire written history of humanity (the “open internet”) to build its model. In court, they had argued that this was “fair use,” a transformative act necessary for progress. Yet, when someone else tries a similar tactic, by paying for API access, no less, to learn from their model, it’s suddenly an “industrial-scale attack” that threatens the free world.

The Tale of Two Thefts

To be fair, Anthropic is trying to cling to nuance; on its blog, it states that distillation itself is a “widely used and legitimate training method”, one that it uses itself! It argues that the crime isn’t the technique but the illicit way it was carried out: via fake accounts, terms-of-service violations, and so on.

Yet, it is a tough sell for a public that just watched the company settle a billion-dollar lawsuit for doing something far more blatant: copying actual copyrighted text from authors without paying a dime. As one X user put it, “When you guys train your model by bombarding others for free of cost, it’s fine. But if others are training by paying your model, it’s illegal? Unethical?” The optics of it, naturally, are devastating. A company that is a beneficiary of the ultimate distillation attack, humanity’s entire intellectual output distilled into a chatbot’s training, is now shocked to find that someone would want to do the same to it.

The Meme War and the Bigger Picture

The fallout has been a gift to the internet’s meme-makers and black-humourists. One created a meme with three panels: “Never ask a woman her age, a man his salary and an AI company where they got their training data.” Of course, Musk was the first, writing: “How dare they steal the stuff Anthropic stole from human coders??” He also called the company “MisAnthropic”. Another meme had the statement: “You’re trying to kidnap what I’ve rightfully stolen.”

It also brings to the fore a fresh wave of mockery of the ongoing spat between OpenAI’s Sam Altman and Anthropic. Just a few weeks ago, Altman threw a very public, 420-word “tantrum” (as the BBC so aptly described it) over a Super Bowl ad where Anthropic hilariously mocked the idea of ads in AI. And at the India AI Impact Summit recently, Altman and Anthropic CEO Dario Amodei famously found themselves next to each other, and refused to hold hands. And now, it is Anthropic’s turn to throw the tantrum.

Yet, beyond the fun memes, the key thing for us to remember is that this comes at a time when Anthropic is in a fight with none other than the US government, with War Secretary Pete Hegseth threatening to not only terminate its $200 million contract, but designate the company a “supply chain risk”, a label typically reserved for foreign adversaries. This would effectively blacklist them from any government-related business.

Posting that the company faces a threat from China at this time serves two purposes. One is to show how good its models are: so good that China is trying to plagiarise them. The other is that, in sounding the patriotic bugle, the company wants to appeal to the MAGA heart inside both Trump and his base. This has evidently not worked, as Anthropic has rolled back some of its safety features, a move it says has nothing to do with the spat with the government. But not many are buying that.

The Uncomfortable Truth

Beyond the memes and the mockery, though, there are serious points to be made. Anthropic’s complaint about distillation attacks is not entirely without merit. The use of thousands of fake accounts to systematically extract a model’s capabilities for commercial gain is a shady practice that violates terms of service, not to mention international law. The national security concerns about safeguards being stripped away are valid talking points, even if they’re conveniently crafted to serve their own interests.

This isn’t just about distillation. It’s about a young industry that built itself by standing on the shoulders of giants: authors, artists, programmers, and every human who ever posted anything on the internet, and is now furiously trying to kick away the ladder before anyone else can climb up. Anthropic wanted to be seen as the sheriff protecting the town from outlaws. Instead, they’ve been outed for their hypocrisy. But beyond that, the issues are real. Will they ever be addressed? Only time will tell.
